Tagline: Vendor bias is real, the benchmarks prove it, and the engineers who’ve figured out which model to use for which job are quietly lapping everyone else.

There’s a question I hear constantly in engineering circles: “Which AI should I use?”

The implicit assumption behind it is that there’s one right answer. Pick the best one, use it for everything, done. It’s how we think about most tools — you pick your IDE, your cloud provider, your language. You don’t swap between three of them mid-task.

But AI models aren’t like that. And the sooner you stop treating them like they are, the better your output gets.

I use six different AI tools in my workflow. Not because I enjoy managing subscriptions, but because each one is meaningfully better at a specific job — and the benchmarks, plus two years of daily use, back that up.

The vendor bias problem no one talks about

Most people pick their AI assistant the same way they pick a phone: brand loyalty, whatever their company pays for, or whatever the loudest voice in their team recommends.

The result is monoculture. One model, used for everything, never questioned. And because the model is capable enough to produce something — often something good-looking — it’s easy to miss that a different tool would have done the job better.

This isn’t hypothetical. Researchers writing in Nature Communications warned earlier this year that AI is turning research into a “scientific monoculture”: homogenised outputs, shared blind spots, correlated failures. Gartner predicts that by 2028, 70% of organisations building multi-model applications will have AI gateway middleware specifically to avoid single-vendor dependency. LinkedIn reports that “model selection” is now one of the fastest-growing skills among senior engineers.

The engineers who’ve noticed the problem are moving. The ones who haven’t are wondering why their AI output feels the same as everyone else’s.

What the benchmarks actually say

Before I get into my specific workflow, let me give you the data that convinced me models aren’t interchangeable.

SWE-Bench Verified is the closest thing we have to a real-world software engineering test. Unlike HumanEval — which asks models to write isolated functions from scratch — SWE-Bench gives a model a real GitHub repository, a real bug report, and asks it to produce a fix. No hints about which files to look at. Multi-file edits. Tests written for the human fix, not for the AI. It’s what software engineers actually do.

The current top-line scores (as of April 2026, SWE-Bench Verified):

| Model | SWE-Bench Verified |
|---|---|
| Claude Opus 4.5/4.6 | ~80.9% |
| Claude Sonnet 4.6 | ~79.6% |
| GPT-5 | ~74.9% |
| Gemini 2.5 Pro | ~73.1% |
| Grok Code Fast | ~70.8% |

That’s a gap of roughly 10 points (80.9% versus 70.8%) between the top and bottom of that table. On tasks that represent real engineering work (navigating a codebase, diagnosing a root cause, making multi-file changes), that gap is not noise. It’s the difference between a model that resolves your bug and one that produces a plausible-looking patch that breaks something else.
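
For anyone who wants to check the arithmetic, here is a small sketch that recomputes the gaps from the approximate scores in the table. The dictionary simply restates those figures; the helper name `gap` is my own, for illustration only.

```python
# Approximate SWE-Bench Verified scores (percent), restated from the table.
scores = {
    "Claude Opus 4.5/4.6": 80.9,
    "Claude Sonnet 4.6": 79.6,
    "GPT-5": 74.9,
    "Gemini 2.5 Pro": 73.1,
    "Grok Code Fast": 70.8,
}


def gap(a: str, b: str) -> float:
    """Difference in percentage points between two models, to one decimal."""
    return round(scores[a] - scores[b], 1)


top = max(scores, key=scores.get)
bottom = min(scores, key=scores.get)

spread = gap(top, bottom)
opus_vs_sonnet = gap("Claude Opus 4.5/4.6", "Claude Sonnet 4.6")

print(f"{top} vs {bottom}: {spread} points")
print(f"Opus vs Sonnet: {opus_vs_sonnet} points")
```

The spread across the table is about ten points, while the gap between the two Claude models is a little over one.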

But here’s what the leaderboard doesn’t tell you: SWE-Bench measures software delivery. It doesn’t measure research, design, ideation, or critique. The model that tops the coding benchmark isn’t necessarily the best tool for synthesising a market landscape or stress-testing an architecture decision.

That’s the bit that took me a while to learn. Different jobs. Different models.

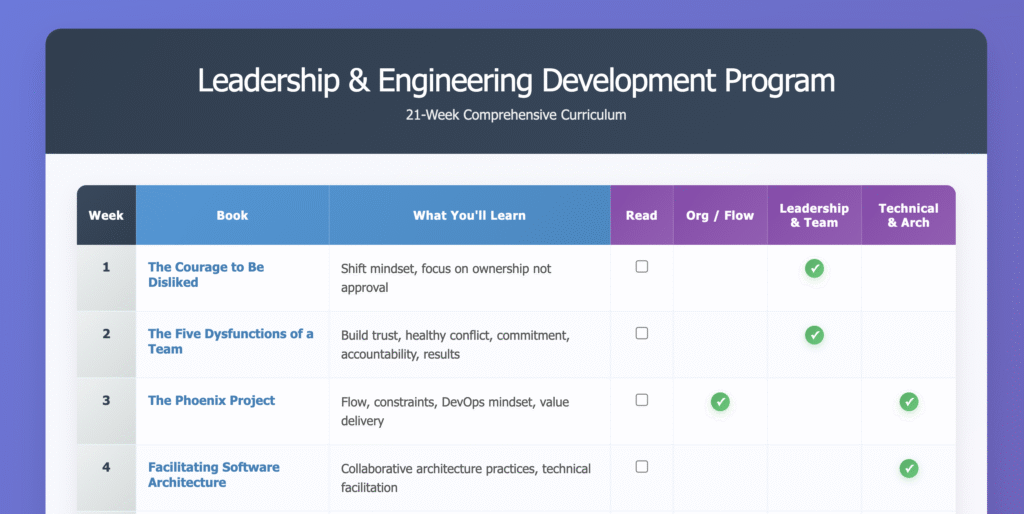

My workflow: six models, six jobs

Here’s what I actually use and why.

Gemini — broad context gathering

Google’s model has a context window large enough to be genuinely useful for research synthesis. When I need to understand a large domain quickly — technical landscape, regulatory environment, competitive positioning — Gemini handles breadth well. It connects across a lot of surface area without getting lost.

I don’t use it for precision work. But when I need to go wide before going deep, it’s the right first move.

Perplexity — external research

When I need current information with citations, Perplexity is in a different category. It retrieves, cites, and synthesises in one pass. Not a replacement for reading primary sources, but significantly faster for building a research base. The multi-model routing it now supports (running queries across GPT, Gemini, and Claude simultaneously) makes it even more useful as a research layer.

Claude Opus — design and architecture

This is where I spend the most time for high-stakes thinking. System design, architecture decisions, PRD writing, anything where the reasoning chain matters and I need a thinking partner who pushes back correctly rather than just agreeing.

Opus doesn’t just answer — it models the problem. It tells me when my framing is off. It proposes alternatives I hadn’t considered. For a 40-person engineering team where a bad architecture decision stays expensive for years, that’s worth paying for.

Grok — brutal second opinion

This one might surprise people. Grok’s personality is calibrated differently to the others. It has fewer soft edges. Where Claude will often find a way to be constructive about a bad idea, Grok will tell you it’s a bad idea.

I use it specifically as adversarial review. After I’ve built something or made a design decision with Opus, I take it to Grok and ask what’s wrong with it. The quality of the critique isn’t always higher — but the willingness to deliver one bluntly is, and that’s what I need at that stage.

Claude Sonnet — delivery

Most of the actual code gets written here. Fast, capable, good context retention across a session. The SWE-Bench Verified gap between Sonnet and Opus is now about 1.3 points (79.6% versus 80.9%), which means for standard implementation work, the speed and cost profile of Sonnet wins.

This is the model I’m in most of the day for Claude Code sessions. It does the work.

GitHub Copilot — peer review and pull request generation

Copilot lives in the IDE. It sees the diff, knows the repo history, and does line-by-line code review in context. For PR generation and review commentary, having it operate at the file level with access to the surrounding codebase is a genuine advantage over copy-pasting into a chat interface.

It’s not my primary reasoning engine. But for the last mile of code review before merge, it earns its place.

Is this just me?

No. The multi-model approach has crossed from experimental into mainstream.

Advanced AI users now average more than three different models daily, choosing specific tools by task type. McKinsey published an enterprise workflow guide this year built around model specialisation — triage models, reasoning models, execution models, each matched to a task profile. Microsoft launched a “Model Council” feature in Copilot that routes between GPT-5.4, Claude Opus, and Gemini simultaneously.

CIO magazine ran a piece earlier this year called “From vibe coding to multi-agent AI orchestration: Redefining software development”. That’s not a niche publication running a speculative take — that’s the mainstream enterprise audience catching up to where the practitioners already are.

The pattern has a name now: model tiering. Fast, cheap models handle routine work (routing, classification, summarisation). Mid-tier reasoning models handle standard implementation. Frontier models get reserved for complex design, not burned on things that don’t need them.
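
In code, tiering can be as simple as a lookup from task type to tier. What follows is a minimal sketch, not a standard: the tier names, the task labels, and the choice to fall back to the mid tier for unknown tasks are all my own assumptions for illustration.

```python
# Hypothetical task labels mapped to hypothetical tier names.
TIER_FOR_TASK = {
    "routing": "fast",
    "classification": "fast",
    "summarisation": "fast",
    "implementation": "mid",
    "design": "frontier",
}

# Assumption: unknown task types go to the mid tier rather than the
# most expensive one. A real setup might choose differently.
DEFAULT_TIER = "mid"


def pick_tier(task_type: str) -> str:
    """Return the tier a task should be sent to."""
    return TIER_FOR_TASK.get(task_type.strip().lower(), DEFAULT_TIER)


for task in ("summarisation", "implementation", "design", "something-new"):
    print(task, "->", pick_tier(task))
```

The point of keeping the table explicit is that the routing decision becomes reviewable: anyone on the team can see which kind of work is being sent to which class of model, and change it in one place.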

The case against (and why I still do it anyway)

It’s fair to push back on this. Managing six different tools has overhead: different interfaces, different pricing models, different context management, different strengths to remember. There’s a reasonable argument that the cognitive load of model selection erodes the time you’d gain from using the best tool.

My answer is that the overhead is front-loaded. After two years of daily use, I don’t consciously decide which model to use any more than I decide which muscle to use when I pick something up. The routing is automatic. The habit is built.

The bigger risk is the one I started with: monoculture. One bad vendor decision — a price hike, a terms change, a capability regression — and your entire AI-assisted workflow is down. I’ve spoken to engineers who migrated off a single provider three times in 18 months for exactly this reason. Diversification is resilience.
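
One way to make that resilience concrete is a fallback chain: try the preferred provider, and move to the next one if the call fails. This is a minimal sketch under stated assumptions. Each provider is represented by a plain callable that takes a prompt and returns text; the names `complete_with_fallback` and `AllProvidersFailed` are hypothetical, and no real vendor SDK is used here.

```python
from typing import Callable, Sequence

# Hypothetical provider type: a display name plus a function from prompt to text.
Provider = tuple[str, Callable[[str], str]]


class AllProvidersFailed(RuntimeError):
    """Raised when every provider in the chain raised an error."""


def complete_with_fallback(prompt: str, providers: Sequence[Provider]) -> tuple[str, str]:
    """Try each provider in order; return (provider_name, response) from the first success."""
    errors = []
    for name, call in providers:
        try:
            return name, call(prompt)
        except Exception as exc:  # broad on purpose: any failure triggers fallback
            errors.append(f"{name}: {exc}")
    raise AllProvidersFailed("; ".join(errors))
```

In practice each callable would wrap a real client. The sketch only shows the shape of the idea: if the workflow never hard-codes a single vendor, a price hike or outage becomes a configuration change rather than a migration.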

We’re building our own benchmark

Here’s the part where I have to be honest about something.

The SWE-Bench scores I quoted above are real and useful. But they’re increasingly gamed. Labs know what’s on the test. The scores keep going up. The real-world usefulness doesn’t always follow.

I’ve been building AIMOT — the AI Model Operational Test. Named after the UK’s annual MOT roadworthiness check: a practical, pass/fail fitness test that doesn’t care how the vehicle performed in a lab. It cares whether it’s safe to drive.

The design principle that changes everything: no human interpretation. Every test must be scoreable from the output alone — numerical answer within a defined tolerance, binary fact check, code that runs or doesn’t, schema validation. If I can’t define the scoring before seeing the output, the test is disqualified.
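
To show what “scoreable from the output alone” can mean in practice, here is a rough sketch of the four scorer types named above. It is not the AIMOT implementation: the function names, the exact matching rules, and the timeout are assumptions made for illustration.

```python
import json
import subprocess
import sys


def score_numeric(output: str, expected: float, tolerance: float) -> bool:
    """Pass if the output parses as a number within +/- tolerance of expected."""
    try:
        value = float(output.strip())
    except ValueError:
        return False
    return abs(value - expected) <= tolerance


def score_binary(output: str, expected: str) -> bool:
    """Pass if the output matches the expected answer, ignoring case and padding."""
    return output.strip().lower() == expected.strip().lower()


def score_schema(output: str, required: dict[str, type]) -> bool:
    """Pass if the output is a JSON object with every required field of the right type."""
    try:
        data = json.loads(output)
    except json.JSONDecodeError:
        return False
    if not isinstance(data, dict):
        return False
    return all(isinstance(data.get(key), kind) for key, kind in required.items())


def score_runs(source: str, timeout_s: float = 5.0) -> bool:
    """Pass if the Python source exits with status 0 within the timeout.

    This executes untrusted code in a child process; a real harness
    would need proper sandboxing.
    """
    try:
        result = subprocess.run(
            [sys.executable, "-c", source],
            capture_output=True,
            timeout=timeout_s,
        )
    except subprocess.TimeoutExpired:
        return False
    return result.returncode == 0
```

Every scorer returns a plain pass or fail and takes its expected value as an argument, so the scoring rule has to be written down before any model output exists. For example, with a hypothetical tolerance of 0.01, `score_numeric("6.93", expected=6.93, tolerance=0.01)` passes and `score_numeric("10.00", expected=6.93, tolerance=0.01)` fails.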

I built the v1 test suite in a slightly strange way: I asked five frontier models to write the questions. That gave me 75 candidate tests, 15 from each model. Then I verified every expected answer by hand.

Two of the five models submitted tests with wrong expected answers. ChatGPT got an error propagation calculation wrong (the correct value is 6.93, not the 10.00 it claimed). Copilot produced a logic problem where the “correct” answer wasn’t correct. Both stated their wrong answers with complete confidence.

A full post on AIMOT is coming. For now: if you want a benchmark that tests models on tasks that actually matter in professional work — quantitative reasoning, logical falsification, real code bugs, domain knowledge — that’s what it’s designed to do. And the first results run is about to happen.

The principle

The vendor bias problem isn’t about which model is best. It’s about assuming the answer is fixed.

Models have different strengths. The benchmarks measure some of them. Daily use reveals the rest. The engineers who treat model selection as a skill — who deliberately match tool to task — are producing better work than the ones who picked a default in 2024 and never revisited it.

That 10-point SWE-Bench gap is real. It compounds over time. And if you’re not running your own benchmark, someone else’s numbers are the best you’ve got.

AIMOT Pro v1 results are next. The full 28-test suite, the first model run, and the scores. No cherry-picking.